Privacy-first authenticity checks for insurer invoices and reports

Oct 2, 2025

- Team VAARHAFT

(AI generated)

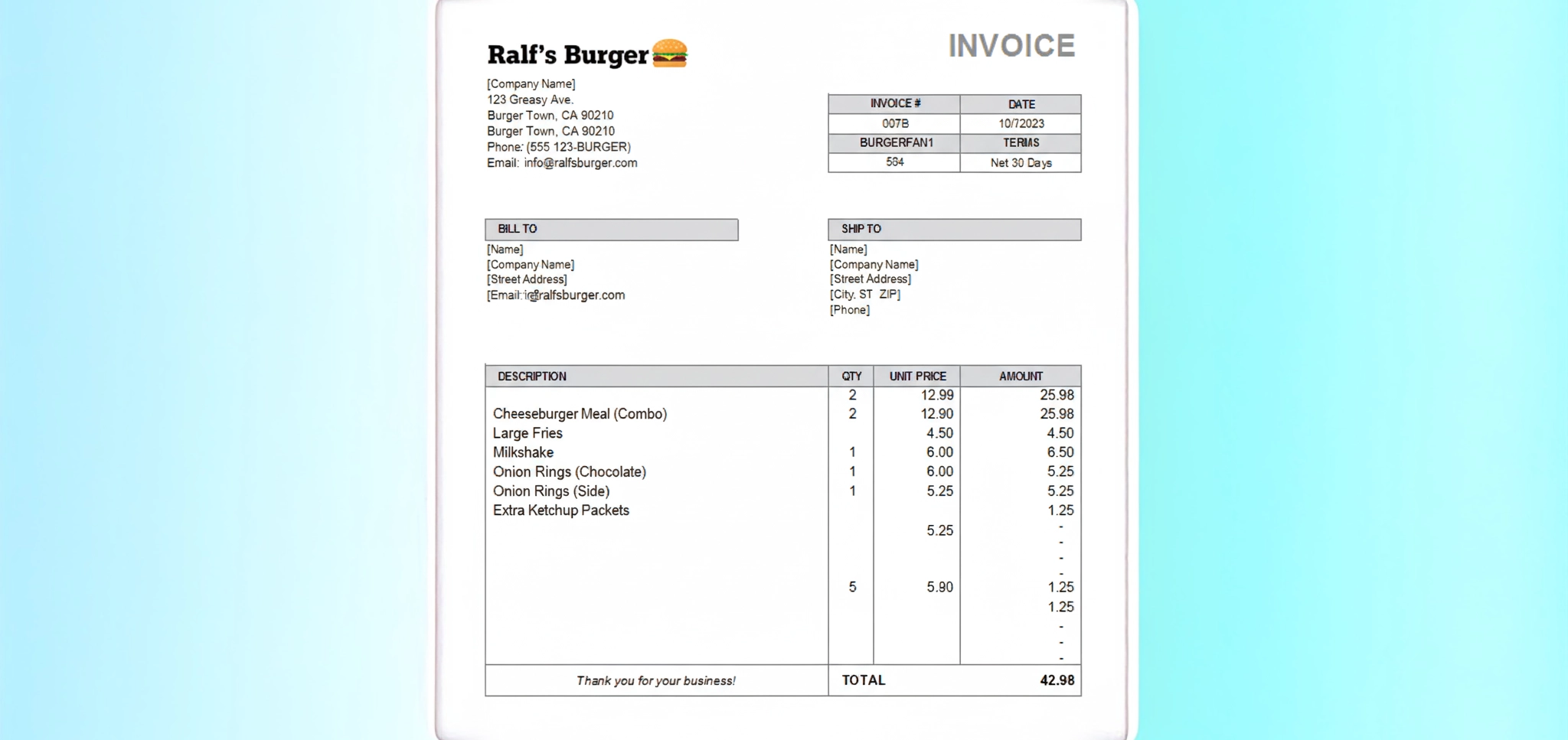

A car image that looks convincingly dented. A repair invoice with the right logos and totals. A certification PDF that passes a cursory glance. Fraudsters exploit AI and easy editing tools to slip such assets into claims and underwriting. In May 2024, UK insurers warned about a surge of manipulated damage photos used to inflate or fabricate claims, with some carriers reporting triple-digit growth in shallowfake edits across a single year (The Guardian). That context sets up the essential question for every claims and risk leader today: How do insurers verify the authenticity of repair invoices, expert reports, and certificates under strict data protection regulations?

This article outlines a pragmatic, privacy-first way insurers can verify authenticity of repair invoices, expert reports and certificates while respecting GDPR and sector rules. It blends practice-tested steps, regulatory touchpoints and emerging standards such as Content Credentials and verifiable credentials. The goal is simple: reliable verification without over-collecting personal data.

Why this matters now

Insurers have digitised claims and remote inspections at speed. That shift improved customer experience, yet it also expanded the attack surface for synthetic media and convincingly edited documents. Industry supervisors track this digitalisation trend and its risk implications for the European market, including AI adoption and the need for robust controls. In parallel, the EU’s AI Act is moving into staged application, creating obligations around transparency and governance for certain AI uses over the next two years. These forces converge on claims and SIU leaders, who must verify repair invoice authenticity, detect manipulated expert reports and confirm certificate provenance without storing more data than necessary.

The challenge in practice: threats, evidence types and what must be verified

Verification spans multiple artefacts. First, scanned invoices or native PDFs with embedded fonts and signatures. Second, expert reports or appraisals, sometimes exported from niche tools. Third, certificates for parts, repairs or compliance. Fourth, photos and short videos captured at first notice of loss. Each class carries distinct manipulation vectors, from plain copy and paste and cloned stamps to AI-generated text and images that mimic house styles and seals. Deepfake generation keeps improving while detectors face generalisation and robustness challenges in the wild, as synthesised media diversify and editing pipelines get cleaner.

- Primary risk vectors to triage quickly: reused or duplicate media across multiple claims, inconsistencies in metadata and timestamps, localised edits on stamps or totals, and assets that never passed through a trusted capture path.

- Operational friction to manage: verification must be explainable to adjusters and auditable for regulators while remaining fast enough to avoid payout delays.

- Privacy constraints to respect: data minimisation, storage limitation and purpose limitation must hold across automated checks and manual escalations.

A practical verification framework for insurers

Below is a four-step workflow, followed by a selective-disclosure pattern for sensitive facts, that aligns with the core question of how insurers verify the authenticity of repair invoices, expert reports, and certificates under strict data protection regulations. It favours progressive disclosure and uses provenance signals when available.

- Lightweight triage. Run fast metadata checks on images and PDFs, detect duplicates via privacy-preserving fingerprints and flag obvious anomalies such as mismatched capture dates or device fields. Duplicate detection across portfolios helps catch recycled damage photos and reused certificates without storing the original media.

- Automated forensics with explainability. Apply image and document forensics that identify AI generation or editing and visualise suspicious regions at pixel level. Explainable overlays support adjusters and SIU in communicating decisions and documenting case files for later review.

- Provenance and content credentials. Where available, validate C2PA Content Credentials and similar provenance manifests to understand who created the asset, which tools touched it and whether the chain of custody remains intact. For a deeper dive into capabilities and gaps of the standard, see this analysis of C2PA’s strengths and limitations (Vaarhaft).

- Trusted re-capture when doubt remains. If a file is flagged or lacks provenance, invite the claimant to re-capture verification photos in a controlled, tamper-evident flow that detects screen re-photography or flat 2D spoofs and attaches fresh integrity signals.

- Selective disclosure for sensitive facts. Use privacy-preserving patterns to confirm specific claims, such as whether a certification is valid for a VIN and date range, without ingesting the full identity dataset.
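The duplicate check in the triage step can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any vendor's implementation: `fingerprint`, `DuplicateIndex` and `check_and_register` are hypothetical names, the key would come from a secrets store in practice, and a keyed SHA-256 digest only catches byte-identical files. Catching re-encoded or cropped copies would need a perceptual hash, which is not shown here.

```python
import hashlib
import hmac
from typing import Dict, Optional


def fingerprint(media: bytes, key: bytes) -> str:
    """Return a keyed digest of the file bytes (HMAC-SHA256).

    Only this digest is stored; the media itself can be deleted after the check.
    """
    return hmac.new(key, media, hashlib.sha256).hexdigest()


class DuplicateIndex:
    """Hypothetical in-memory index mapping fingerprints to the first claim seen."""

    def __init__(self, key: bytes) -> None:
        self._key = key
        self._seen: Dict[str, str] = {}

    def check_and_register(self, claim_id: str, media: bytes) -> Optional[str]:
        """Return the earlier claim ID if this exact file was seen before, else None."""
        fp = fingerprint(media, self._key)
        earlier = self._seen.get(fp)
        if earlier is None:
            # First sighting: remember which claim submitted it.
            self._seen[fp] = claim_id
        return earlier


if __name__ == "__main__":
    index = DuplicateIndex(key=b"demo-key-assumption")
    print(index.check_and_register("claim-001", b"photo bytes"))  # first sighting
    print(index.check_and_register("claim-002", b"photo bytes"))  # reused file
```

Using a keyed digest rather than a plain hash means that someone who obtains the index cannot test guessed files against it without also holding the key.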

Insurers often operationalise Steps 2 to 4 with privacy-first tooling. For instance, a forensic scanner that runs in seconds, deletes media after analysis and returns an audit-ready PDF can minimise handoffs while keeping adjusters in the loop. When a scan flags an asset as dubious, a web-based trusted capture flow can re-collect evidence without app installs, closing the loop in minutes. Explore how the Vaarhaft Fraud Scanner, a document-focused forensic scan API, fits into such a workflow.

Legal, compliance and privacy constraints

A robust answer must anchor verification in GDPR principles. The lawful basis typically sits in contract performance or legitimate interests with appropriate balancing tests. Data minimisation argues for layered checks that verify only what is necessary at each step. Storage limitation points to short-lived processing with immediate deletion once a decision is made. High-risk deployments of AI components may require a data protection impact assessment and clear human oversight.

Two operational safeguards help teams stay compliant. First, keep audit trails that record verification actions and decisions without retaining the underlying personal data longer than needed. Second, favour verification methods that provide a yes or no conclusion about authenticity or validity while revealing as little personal information as possible.
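One way to picture the first safeguard is an audit entry that stores the decision and a digest of the asset, but never the asset itself. The sketch below is illustrative only: `AuditRecord`, `record_check` and `to_json_line` are hypothetical names, and the field set is an assumption. Whether a digest of a personal document still counts as personal data is a question to settle with your data protection officer.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical audit entry: what was checked and decided, not the content."""

    case_ref: str      # internal case reference, not a claimant identifier
    check: str         # name of the verification step that ran
    passed: bool       # yes/no outcome of that step
    asset_digest: str  # SHA-256 of the file, so the entry can be tied to it later
    decided_at: str    # UTC timestamp in ISO 8601 format


def record_check(case_ref: str, check: str, passed: bool, media: bytes) -> AuditRecord:
    """Build an audit entry from the media without keeping the media."""
    return AuditRecord(
        case_ref=case_ref,
        check=check,
        passed=passed,
        asset_digest=hashlib.sha256(media).hexdigest(),
        decided_at=datetime.now(timezone.utc).isoformat(),
    )


def to_json_line(record: AuditRecord) -> str:
    """Serialise one entry as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    entry = record_check("case-42", "metadata_consistency", True, b"invoice pdf bytes")
    print(to_json_line(entry))
```

The entry answers "which check ran, on which file, with what result, and when" and can outlive the media it refers to.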

Technology and process patterns that work, and limits to plan for

Hybrid verification chains perform best. Automated detectors catch many low-effort edits and obvious AI traces. Explainable overlays guide adjusters to scrutinise totals, seals and stamp areas in invoices or the boundary of damage regions in photos. Human review remains vital for edge cases, especially where generative models produce high-quality imagery that evades single-signal detectors.

Content provenance is gaining traction but still uneven across devices and platforms. C2PA Content Credentials can attach cryptographically signed metadata that records origin and edits. Some platforms have piloted authenticity labels and industry coalitions keep growing, yet adoption remains partial and inconsistent across smartphones and social networks, as recent reporting notes. Insurers should treat provenance as a strong positive signal when present, not a mandatory prerequisite.
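A small routing function shows what "positive signal, not prerequisite" could mean in practice. This is a sketch under assumptions: the `Signals` fields, the `Route` names and the routing policy are all hypothetical, and a real deployment would set its own rules. The point is the three-valued provenance field, where missing credentials are treated as neutral rather than as a failure.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Route(Enum):
    FAST_TRACK = "fast_track"  # valid credentials and nothing flagged
    STANDARD = "standard"      # no credentials, nothing flagged: normal handling
    RECAPTURE = "recapture"    # something is doubtful: request trusted re-capture


@dataclass
class Signals:
    forensic_flagged: bool             # forensics found signs of editing or generation
    duplicate_found: bool              # the same file appeared in another claim
    provenance_valid: Optional[bool]   # True/False if credentials exist, None if absent


def route(signals: Signals) -> Route:
    """Illustrative policy: broken provenance is a red flag, absent provenance is not."""
    if signals.forensic_flagged or signals.duplicate_found:
        return Route.RECAPTURE
    if signals.provenance_valid is False:
        # Credentials were attached but failed validation.
        return Route.RECAPTURE
    if signals.provenance_valid is True:
        return Route.FAST_TRACK
    # No credentials attached: neither rewarded nor penalised.
    return Route.STANDARD


if __name__ == "__main__":
    print(route(Signals(False, False, None)))
    print(route(Signals(False, False, True)))
    print(route(Signals(False, False, False)))
```

Keeping "absent" distinct from "invalid" avoids penalising claimants whose devices do not yet write Content Credentials.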

A straightforward improvement is to introduce trusted re-capture for doubtful cases. A web-based camera flow that blocks screen re-photography and verifies that a real three-dimensional scene is being recorded gives claims teams a clean baseline to compare against earlier uploads. If your triage and forensics flag an invoice or photo, a secure re-capture request resolves most disputes quickly and with less personal data. See how the Vaarhaft SafeCam verification capture flow slots into claims without forcing app installs.

Conclusion and next steps

The fastest path to reliable verification balances automation, provenance and privacy. Start with lightweight triage, add explainable image and document forensics, validate content credentials when available and resolve edge cases with trusted re-capture. Keep audit trails while minimising retention. Align AI components with GDPR and the AI Act’s trajectory. That is how insurers answer the question in full: How do insurers verify the authenticity of repair invoices, expert reports, and certificates under strict data protection regulations?

If your team wants to see how privacy-first document and image forensics, plus secure re-capture, look in a live claims flow, our specialists can walk through representative cases and discuss integration options that match your governance model. For background on AI-generated document fraud tactics and their implications for claims, you can also explore complementary reading from our analysts.
