Real-Time Document Authenticity Check: Stop Synthetic Receipts and Forged Proofs at Upload

Sep 24, 2025

- Team VAARHAFT

(AI generated)

Decision-makers across expense, insurance, fintech, HR, and marketplace workflows are confronting the same reality: convincing, AI-generated paperwork now slips past template rules, OCR, and quick visual reviews. The challenge is no longer whether you can spot a fake eventually, but whether you can prevent it from entering your flow in the first place—without punishing legitimate users.

This article defines what a real-time document authenticity check actually is, why it’s a now-problem rather than a later-problem, how modern systems do it without wrecking UX, where provenance metadata helps and where it breaks, and how to measure impact in ways executives and engineers both trust.

Why “real-time authenticity” became a CEO-level problem

Generative models have collapsed the cost of forgery. In spring 2025, multiple outlets showed how GPT-4o-class tools can produce photorealistic receipts that look like quick iPhone snapshots, with wrinkles, stains, plausible typography, and consistent arithmetic included. That matters because a huge number of reimbursement and payout flows still accept “a photo of a document” as proof. See, for example, TechCrunch’s coverage of ChatGPT’s image generator forging receipts, which summarizes why so much real-world verification is suddenly brittle (TechCrunch).

The macro picture is moving the same way. The FBI’s Internet Crime Complaint Center logged 859,532 complaints in 2024 and reported losses exceeding $16 billion, a 33% jump from 2023. That is exactly the sort of environment where cheap, scalable fakery thrives. The Bureau has also warned that criminals now use generative AI to boost believability and scale. When the top line looks like that, pushing authenticity checks to the front of the funnel stops being optional (IC3 2024 Report PDF).

What authenticity really means (and what it doesn’t)

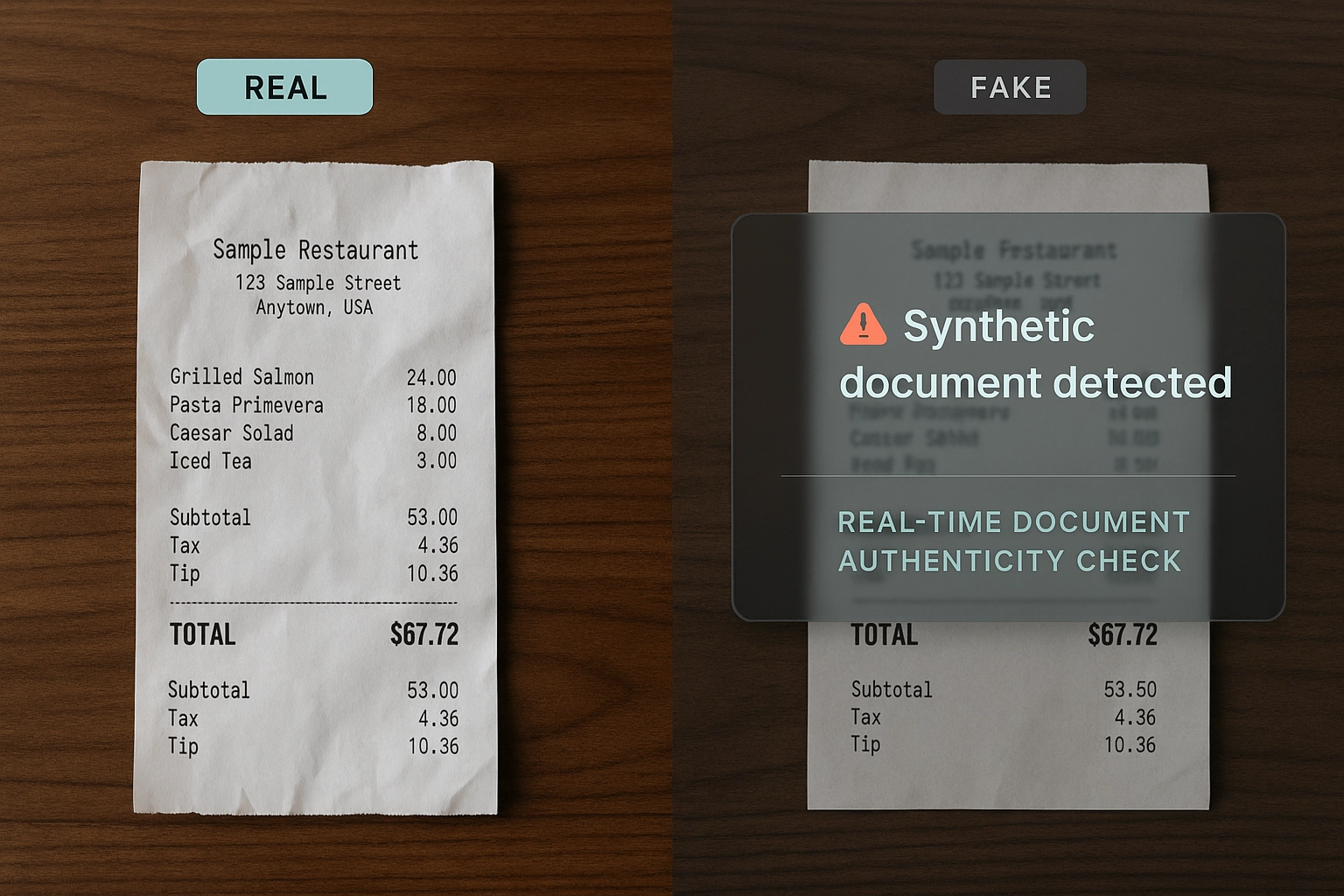

Authenticity is not the same thing as “the numbers add up” or “the text is readable.” You are not validating whether a dinner should be reimbursed or a discount should have applied. You are determining whether the submitted image is a genuine capture of a real-world artifact rather than a synthetic render, an edited composite, or a screen photo of a PDF. That distinction matters, because modern generators can reliably produce aligned text, neat logos, and arithmetic that balances, which are exactly the details older image models fumbled. The GPT-4o receipt demonstrations made this point brutally clear (TechCrunch).

The most common 2025 patterns are now familiar to anyone running a trust queue: fully AI-generated receipts and invoices that look like phone photos; documents that were real at some point but have been edited to change a date, a sum, a line item, or a beneficiary; “picture-of-a-picture” submissions where a screenshot or a monitor photo is used to erase provenance; and contextual misuse, where a legitimate bill is used to support the wrong claim. Each of these attacks targets the intake moment, not the back office, which is why a real-time authenticity check needs to make a fast, defensible call at upload.

Where provenance metadata helps—and where it breaks

Provenance standards such as the Coalition for Content Provenance and Authenticity (C2PA) are valuable because they embed cryptographically signed records of capture and edits directly into a file. Adoption is growing across tooling and even hardware. Leica’s M11-P can sign Content Credentials at the moment of capture, and creative software ecosystems like Adobe’s have leaned into the standard. OpenAI adds Content Credentials to images produced within ChatGPT and its API, improving verifiability in controlled pipelines (Content Authenticity Initiative).

But credentials are not a panacea. In the real world, they are frequently stripped, sometimes by design and sometimes by accident. Many platforms remove metadata on upload, and a simple screenshot will not preserve the original credential chain. Even advocates highlight that screenshots and re-recordings effectively remove secure metadata; you cannot assume that “no credential” means “not authentic,” nor can you assume the reverse. Treat credentials as strong hints, not as the sole basis for a decision. Read more about C2PA and its limitations here.

The anatomy of a real-time document authenticity check

A credible system blends several independent checks and returns a single decision quickly enough to feel invisible in the UI. Pixel-level forensics probe how the image was produced, rather than what the text says. Synthetic renders tend to leave telltale traces in texture and frequency space, in local noise statistics, in the way edges and shadows behave, and in how compression was applied. Edits often reveal themselves through resampling artifacts, patch seams from copy-move operations, inpainting residuals, or mismatched compression blocks. These cues are not hand-written rules anymore; they are learned models that compare what they see to what the system has learned to expect from real cameras and scanners.
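To make the "local noise statistics" cue concrete, here is a toy sketch in Python. It is a hand-written heuristic, not the learned models described above, and every name in it (`laplacian_residual`, `suspiciously_smooth_blocks`, the 8-pixel block size, the 0.25 ratio) is an illustrative assumption. It computes a high-pass residual over a grayscale image and flags blocks whose residual variance is far below what the rest of the image shows, which is the kind of inconsistency a pasted or inpainted patch can leave behind.

```python
import random
import statistics

BLOCK = 8  # block edge length in pixels (illustrative choice)


def laplacian_residual(img):
    """High-pass residual: 4 * center minus the four neighbours (edges clamped)."""
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            up = img[max(y - 1, 0)][x]
            down = img[min(y + 1, h - 1)][x]
            left = img[y][max(x - 1, 0)]
            right = img[y][min(x + 1, w - 1)]
            out[y][x] = 4 * img[y][x] - up - down - left - right
    return out


def block_noise_variance(img, block=BLOCK):
    """Map (block_row, block_col) to the residual variance inside that block."""
    res = laplacian_residual(img)
    h, w = len(img), len(img[0])
    scores = {}
    for by in range(0, h - block + 1, block):
        for bx in range(0, w - block + 1, block):
            vals = [res[y][x]
                    for y in range(by, by + block)
                    for x in range(bx, bx + block)]
            scores[(by // block, bx // block)] = statistics.pvariance(vals)
    return scores


def suspiciously_smooth_blocks(img, ratio=0.25):
    """Flag blocks whose noise level is far below the image's median level."""
    scores = block_noise_variance(img)
    typical = statistics.median(scores.values())
    return {pos for pos, var in scores.items() if var < ratio * typical}


def demo_image(size=32, seed=0):
    """Synthetic 'sensor noise' image with a perfectly flat patch pasted in."""
    rng = random.Random(seed)
    img = [[rng.randint(100, 140) for _ in range(size)] for _ in range(size)]
    for y in range(7, 25):
        for x in range(7, 25):
            img[y][x] = 120  # the pasted region carries no noise at all
    return img


if __name__ == "__main__":
    print(sorted(suspiciously_smooth_blocks(demo_image())))
```

On its own this cue is weak: overexposed paper or a clean scan is also smooth, so a check like this would only ever be one input among several, never a verdict.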

Structure analysis asks whether the document behaves like a captured artifact. Are baselines and letterforms consistent with the alleged brand? Do logos align across the grid the way they would in a pre-printed form? Does the “photo” cast plausible shadows and exhibit the tiny deviations you get when paper curls, or does it look like a perfectly flat raster? These are small questions, but when answered together they create a strong signal.

Metadata parsing still belongs in the stack. If EXIF, XMP, or a C2PA manifest is present, your system should read and validate it, and it should preserve and surface that information later for audit and customer communications. The important constraint is to avoid a hard dependency: credentials are helpful context, not ground truth, because they are often missing for reasons unrelated to fraud (C2PA spec).

Duplicate and near-duplicate detection closes a different loop. Fraudsters recycle successful fakes. Perceptual hashing and robust embeddings make it possible to catch repeated receipts and documents across users and even across tenants, without exposing personal data.
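As an illustration of perceptual hashing, the sketch below implements a basic average hash over a grayscale pixel grid in plain Python. It is one simple member of the family, chosen for brevity, and not necessarily what any production system uses; the function names and the distance threshold of 6 bits are assumptions. Only the 64-bit fingerprint needs to be stored or compared, not the image itself.

```python
def average_hash(img, grid=8):
    """64-bit average hash: one bit per grid cell, set if brighter than the mean."""
    h, w = len(img), len(img[0])
    if h % grid or w % grid:
        raise ValueError("image sides must be multiples of the grid size")
    bh, bw = h // grid, w // grid
    cell_sums = []
    for gy in range(grid):
        for gx in range(grid):
            cell_sums.append(sum(
                img[y][x]
                for y in range(gy * bh, (gy + 1) * bh)
                for x in range(gx * bw, (gx + 1) * bw)
            ))
    total = sum(cell_sums)
    n = len(cell_sums)
    bits = 0
    for s in cell_sums:
        # Integer comparison of cell mean vs global mean (avoids float rounding).
        bits = (bits << 1) | (1 if s * n > total else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def is_near_duplicate(a: int, b: int, max_distance: int = 6) -> bool:
    """Treat hashes within a few bits of each other as the same document."""
    return hamming(a, b) <= max_distance


if __name__ == "__main__":
    original = [[3 * x + 5 * y for x in range(32)] for y in range(32)]
    brighter = [[p + 5 for p in row] for row in original]   # re-exposed copy
    inverted = [[255 - p for p in row] for row in original]  # different image
    h0 = average_hash(original)
    print(is_near_duplicate(h0, average_hash(brighter)))
    print(is_near_duplicate(h0, average_hash(inverted)))
```

A uniform brightness change leaves the hash untouched, while a genuinely different image lands far away. Crops, rotations, and heavy recompression are harder, which is where the robust embeddings mentioned above come in.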

Finally, there is source assurance for cases where risk is high or signals conflict. The most effective approach is a short, secure live-capture step that confirms the user is photographing a real scene and not a monitor, another phone, or a printed screenshot. VAARHAFT SafeCam does this as a web app, so there is no install step. It blocks picture-of-a-picture attempts in real time and returns a verified capture straight into the flow. This closes the “how do we trust the source” gap that metadata can’t address.

Making it feel invisible: UX and performance

End users should not feel like they are being interrogated. In practice that means you return an immediate pass or block in the vast majority of cases and reserve escalation for a small, well-defined minority. Sub-second decisions are achievable for clear outcomes. When you do escalate, the prompt must be respectful and specific: explain that a quick live photo is needed to confirm a real-world document and that the capture happens in the browser, without installing anything. Communicate decisions back to the user and to support teams with terse, human-readable justifications.

From an engineering perspective, an API-first approach keeps the integration surface simple. Intake calls return a decision and a compact set of indicators your UI can render. Heavy enrichment can proceed asynchronously via webhooks; the front end does not have to wait. Errors should be predictable, rate-limited, and observable. If you are operating across products or markets, your policy engine needs the flexibility to raise thresholds for high-value submissions while keeping friction minimal elsewhere.
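The policy flexibility described here can be sketched as a small decision function. Everything below is hypothetical: the risk score scale (0 to 1, higher means more likely fake), the threshold values, the 1000-unit high-value limit, and the `IntakeDecision` shape are assumptions for illustration, not a documented API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Thresholds:
    pass_below: float   # scores under this are auto-approved
    block_above: float  # scores at or over this are blocked


# Hypothetical policy table: high-value submissions get stricter cut-offs.
DEFAULT = Thresholds(pass_below=0.20, block_above=0.90)
HIGH_VALUE = Thresholds(pass_below=0.10, block_above=0.80)
HIGH_VALUE_LIMIT = 1000.0


@dataclass
class IntakeDecision:
    outcome: str                                    # "pass", "escalate", or "block"
    indicators: list = field(default_factory=list)  # short reasons the UI can render


def decide(risk_score: float, amount: float, indicators=()) -> IntakeDecision:
    """Map a forensic risk score to an outcome under a value-dependent policy."""
    t = HIGH_VALUE if amount >= HIGH_VALUE_LIMIT else DEFAULT
    if risk_score >= t.block_above:
        outcome = "block"
    elif risk_score < t.pass_below:
        outcome = "pass"
    else:
        outcome = "escalate"  # e.g. ask for a live capture
    return IntakeDecision(outcome, list(indicators))


if __name__ == "__main__":
    print(decide(0.15, amount=40.0).outcome)
    print(decide(0.15, amount=5000.0).outcome)
```

The same score produces a pass for a small receipt and an escalation for a large one, which keeps friction concentrated where the exposure is.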

Sector examples that actually change the numbers

Expense management is the most straightforward place to see impact. Authenticity checks run at upload and auto-approve clean, low-risk receipts while blocking clear fakes. That reduces reimbursement leakage and eliminates back-and-forth with employees. Because ambiguous cases are rare and are routed into a small review bucket with evidence summaries, analysts spend seconds, not minutes, on each decision.

Insurance claims often pair a damage photo with a repair invoice. Running pixel-forensics on the photo and document-forensics on the invoice, then cross-checking both against duplicate databases, catches staged scenes and recycled paperwork. For higher-value claims or when picture-of-a-picture signals fire, a live-capture step closes the loop before the payout.

Fintech and KYC flows use the same toolbox for payslips and account statements. Layout coherence and pixel cues identify synthetic or edited proofs, while escalation to web-based live capture confirms you are dealing with a physical document rather than a desktop screenshot. HR onboarding and marketplaces adapt the pattern to credentials, listings, and product shots, removing the incentive to stage immaculate, metadata-less images.

Compliance and standards without the wishful thinking

The EU AI Act is phasing in transparency-related obligations for certain AI systems and for synthetic content, including marking outputs so they are machine-readable and, in some contexts, visibly labeled. That shift is welcome—especially for enterprises that need to document how they handle AI-touched media—but it does not remove the need for forensic checks at intake because many sources in your pipeline will continue to arrive without reliable provenance. Design for user clarity, preserve and surface credentials when present, and maintain auditable decision logs.

In the provenance space, keep leaning into Content Credentials, both to verify trustworthy assets and to educate customers. At the same time, internalize the limitations. Screenshots generally do not carry the original credential chain, and many platforms still strip metadata. The result is an ecosystem where the absence of a credential is ambiguous. That’s exactly why authenticity checks must live at the point of entry rather than in a post-hoc review queue.

Metrics that matter to executives and engineers

Measure the percentage of submissions you block with high confidence, the size and precision of your review bucket, and the time it takes to reach a decision on ambiguous cases. Track the reduction in manual reviews, chargebacks, bogus payouts, and escalations. Pair those with experience metrics—submission time and approval speed for legitimate users—so you can tune thresholds without eroding trust. The FBI’s loss numbers make a compelling backdrop when you explain why latency, precision, and false-positive control are worth the engineering investment.
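A sketch of how these metrics might be computed from a decision log, assuming each record carries the intake outcome, a ground-truth fraud label established later (for example by review or chargeback), and the decision latency. The record fields and metric names are illustrative assumptions.

```python
import statistics


def _ratio(numerator: int, denominator: int) -> float:
    """Safe division: an empty bucket reports 0.0 instead of raising."""
    return numerator / denominator if denominator else 0.0


def intake_metrics(records: list) -> dict:
    """Summarise block rate, review bucket quality, and legitimate-user latency."""
    total = len(records)
    blocked = [r for r in records if r["outcome"] == "block"]
    review = [r for r in records if r["outcome"] == "escalate"]
    legit = [r for r in records if not r["is_fraud"]]
    legit_latency = [r["latency_ms"] for r in legit]
    return {
        "block_rate": _ratio(len(blocked), total),
        "block_precision": _ratio(sum(r["is_fraud"] for r in blocked), len(blocked)),
        "review_share": _ratio(len(review), total),
        "review_precision": _ratio(sum(r["is_fraud"] for r in review), len(review)),
        # Legitimate users who were wrongly blocked.
        "false_positive_rate": _ratio(
            sum(1 for r in legit if r["outcome"] == "block"), len(legit)),
        "median_legit_latency_ms": statistics.median(legit_latency) if legit_latency else 0.0,
    }


def _rec(outcome: str, is_fraud: bool, latency_ms: int) -> dict:
    return {"outcome": outcome, "is_fraud": is_fraud, "latency_ms": latency_ms}


# Made-up sample log, for illustration only.
SAMPLE = (
    [_rec("pass", False, ms) for ms in (200, 220, 240, 260, 280, 300)]
    + [_rec("pass", True, 250)]
    + [_rec("escalate", True, 900), _rec("escalate", False, 950)]
    + [_rec("block", True, 300), _rec("block", True, 310), _rec("block", False, 320)]
)

if __name__ == "__main__":
    for name, value in intake_metrics(SAMPLE).items():
        print(f"{name}: {value:.3f}")
```

Tracking false positives next to block precision is what lets thresholds be tuned without quietly taxing legitimate users.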

A reference architecture that doesn’t fight your stack

Most deployments follow the same arc. Your gateway or front end hands off uploads to an authenticity API that returns a decision and a compact explanation. A policy layer enforces product-specific rules, such as stricter thresholds for high-value items or repeat submitters. An observability tier logs structured evidence for audits and for model and threshold tuning. If you operate a trust team, a lean review console displays the same signals the model used so analysts can move quickly and consistently.
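The arc above can be sketched end to end as follows. The authenticity call is a local stub standing in for a remote API, the policy is passed in as a plain function, and the evidence log is a list of JSON lines; all names, fields, and score values are hypothetical and chosen only to show how the tiers hand off to each other.

```python
import hashlib
import json
from datetime import datetime, timezone


def stub_authenticity_check(image_bytes: bytes) -> dict:
    """Stand-in for the remote authenticity API (hypothetical response shape)."""
    if not image_bytes:
        return {"risk_score": 0.95, "indicators": ["empty_upload"]}
    return {"risk_score": 0.05, "indicators": []}


def simple_policy(result: dict, amount: float) -> str:
    """Policy layer: stricter pass threshold for high-value submissions."""
    pass_below = 0.10 if amount >= 1000 else 0.20
    if result["risk_score"] >= 0.90:
        return "block"
    return "pass" if result["risk_score"] < pass_below else "escalate"


def handle_upload(image_bytes, amount, submitter_id, log,
                  check=stub_authenticity_check, policy=simple_policy) -> str:
    """Gateway hand-off: check, apply policy, and record structured evidence."""
    result = check(image_bytes)
    outcome = policy(result, amount)
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "submitter": submitter_id,
        # Store a content hash rather than the image in the audit trail.
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
        "amount": amount,
        "risk_score": result["risk_score"],
        "indicators": result["indicators"],
        "outcome": outcome,
    }
    log.append(json.dumps(entry, sort_keys=True))
    return outcome


if __name__ == "__main__":
    audit_log = []
    print(handle_upload(b"fake-image-bytes", 50.0, "user-1", audit_log))
    print(handle_upload(b"", 50.0, "user-2", audit_log))
    print(audit_log[-1])
```

Because the log entry carries the same score and indicators the policy saw, a review console can render exactly what drove the decision.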

How VAARHAFT implements this in practice

VAARHAFT’s Fraud Scanner exposes document authenticity as a simple API. It detects AI-generated and AI-edited documents at the pixel level and through document-structure analysis, parses metadata and Content Credentials when present, and performs duplicate and near-duplicate checks. The service returns an actionable decision with indicators you can surface in your product. It’s designed to integrate cleanly into existing workflows so you can place the decision at intake rather than in a manual queue.

For cases where risk is elevated or signals disagree, SafeCam adds source assurance. It is a secure, browser-based camera flow that verifies you are capturing a real scene and blocks picture-of-a-picture attempts in real time. Because it runs on the web, there is nothing to install. Together, these two layers—fast, forensic intake and optional live capture—establish trust by design.

FAQ

- Isn’t OCR with templates enough?

No. OCR verifies what the text says. Authenticity verifies how the image came to be. Modern generators can render clean text and credible layouts; you need pixel-level and structural signals to catch synthesis and edits. The widely shared GPT-4o receipt examples showed how reliable those renderings have become.

- How fast is “real-time”?

For good UX, target sub-second responses for clear pass or block, and keep escalations rare and respectful. Use asynchronous enrichment for heavy analysis without blocking the UI.

- Will Content Credentials solve this once adoption spreads?

They help with provenance and audits, and you should read and preserve them. But credentials are often removed by platforms and screenshots, so absence is ambiguous. You still need forensic checks at intake.

- Do we need a mobile app to do live capture?

No. SafeCam runs as a secure web app and is built to minimize friction while defeating picture-of-a-picture attacks.

- Is this a legal requirement?

This article is not legal advice, but note that EU AI Act transparency duties are expanding, especially for providers and deployers dealing with synthetic media. Align your policy and user communications accordingly, and pair them with technical verification at intake.

Conclusion

Document fraud is no longer a boutique Photoshop problem. It is a cheap, scalable commodity powered by general-purpose AI, and it targets the moment you accept an image. A real-time document authenticity check—combining pixel-forensics, structural analysis, duplicate matching, and, when needed, live capture—moves your defense to the only place it consistently works: the point of entry.

VAARHAFT delivers this as an API-first stack. The Fraud Scanner verifies authenticity and flags manipulation and synthetic generation. SafeCam adds source assurance in the browser when risk is high. Together they keep good users fast and fraud slow. Explore how this looks in insurance and intake workflows on our blog and product pages. If you want to see it in your flow, request a walkthrough of the API and a SafeCam demo.
